Most developers discover the hard way that LLM structured data extraction from real-world documents is nothing like extracting data from clean JSON or well-formatted text. Invoices have inconsistent layouts. Receipts truncate fields. Government forms use abbreviations that weren’t in any training set. When you’re building an accounts-payable pipeline or an onboarding automation that processes thousands of documents a month, extraction failure rates compound fast — a 5% error rate at 10,000 documents/month means 500 manual corrections your team didn’t budget for.

I’ve spent the last several months running extraction pipelines in production across Claude 3.5 Sonnet, GPT-4o, and Gemini 1.5 Pro, processing invoices, receipts, and multi-page forms. This article breaks down accuracy, cost, speed, and — most importantly — how each model fails when it fails.

The Test Setup: What “Real-World” Actually Means Here

Benchmarks that test on clean, single-page, well-scanned PDFs are essentially useless for production planning. My test corpus included:

- 200 invoices — mix of PDF exports from QuickBooks/Xero, scanned paper invoices, and vendor-generated PDFs with multi-currency line items

- 150 receipts — thermal printer output (common OCR artifacts), restaurant receipts with handwritten tip fields, and e-receipts from email

- 100 structured forms — W-9s, insurance intake forms, and rental applications with checkboxes, multi-column layouts, and signature blocks

All documents were passed as base64-encoded images (not pre-extracted text) to simulate a real pipeline where you don’t get to pre-clean the input. Each model was given the same system prompt asking for a JSON response conforming to a fixed schema. Accuracy was measured by field-level match against ground-truth JSON, not document-level pass/fail — because “close enough” isn’t good enough when you’re posting to a ledger.
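
To make "field-level match" concrete, here is a minimal sketch of one way to score a prediction against ground truth. The exact scoring rule is an assumption on my part for illustration (exact equality per leaf field, line items compared by position); `field_accuracy` and its path format are hypothetical names, not part of any SDK.

```python
def field_accuracy(predicted: dict, truth: dict) -> float:
    """Fraction of ground-truth leaf fields that the prediction matches exactly."""

    def flatten(obj, prefix=""):
        # Turn nested dicts/lists into ("line_items[0].amount", 12.5) pairs.
        if isinstance(obj, dict):
            for key, value in obj.items():
                yield from flatten(value, f"{prefix}.{key}" if prefix else key)
        elif isinstance(obj, list):
            for i, value in enumerate(obj):
                yield from flatten(value, f"{prefix}[{i}]")
        else:
            yield prefix, obj

    truth_fields = dict(flatten(truth))
    pred_fields = dict(flatten(predicted))
    if not truth_fields:
        return 1.0
    hits = sum(
        1
        for path, value in truth_fields.items()
        if path in pred_fields and pred_fields[path] == value
    )
    return hits / len(truth_fields)
```

Scoring per field rather than per document means one wrong total on an otherwise perfect invoice costs a fraction of a point instead of failing the whole document, which is closer to how review effort actually scales.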

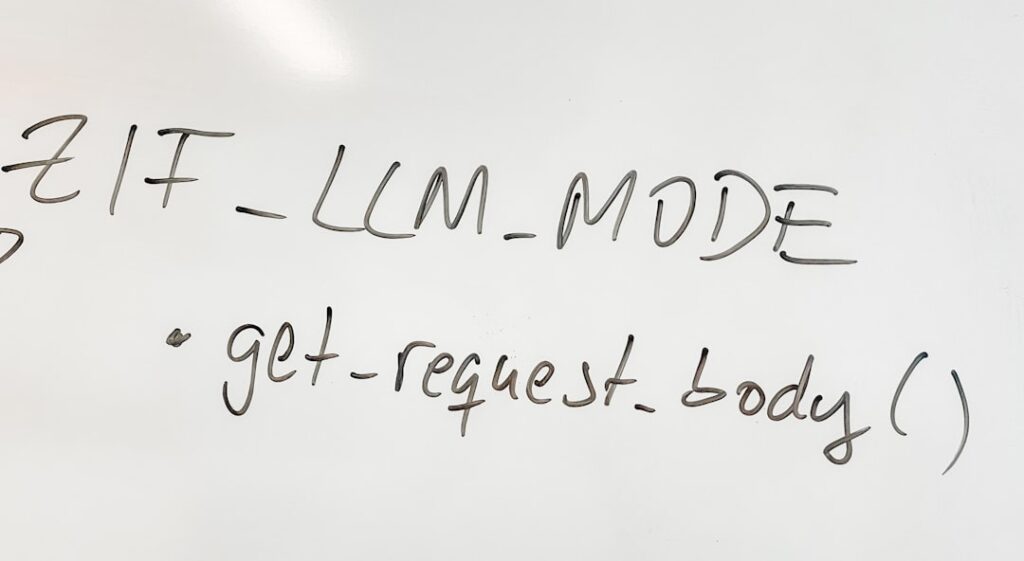

The Extraction Prompt Pattern I Used

```python
import anthropic
import base64
import json

client = anthropic.Anthropic()

def extract_invoice(image_path: str) -> dict:
    with open(image_path, "rb") as f:
        image_data = base64.standard_b64encode(f.read()).decode("utf-8")

    schema = {
        "vendor_name": "string",
        "invoice_number": "string",
        "invoice_date": "YYYY-MM-DD",
        "due_date": "YYYY-MM-DD or null",
        "subtotal": "float",
        "tax_amount": "float",
        "total_amount": "float",
        "currency": "ISO 4217 code",
        "line_items": [
            {"description": "string", "quantity": "float", "unit_price": "float", "amount": "float"}
        ]
    }

    response = client.messages.create(
        model="claude-3-5-sonnet-20241022",
        max_tokens=2048,
        messages=[{
            "role": "user",
            "content": [
                {
                    "type": "image",
                    "source": {
                        "type": "base64",
                        "media_type": "image/jpeg",
                        "data": image_data,
                    },
                },
                {
                    "type": "text",
                    "text": f"""Extract all invoice data into this exact JSON schema.
Return ONLY valid JSON, no explanation.
Schema: {json.dumps(schema, indent=2)}
If a field is not present, use null. Do not infer or hallucinate values."""
                }
            ],
        }]
    )

    # Strip any markdown code fences if the model adds them despite instructions
    raw = response.content[0].text.strip()
    if raw.startswith("```"):
        raw = raw.split("\n", 1)[1].rsplit("```", 1)[0]

    return json.loads(raw)
```

The same pattern with minor SDK differences was used for GPT-4o (via the OpenAI client) and Gemini 1.5 Pro (via the google-generativeai SDK). One immediate practical note: Gemini requires a different image-passing mechanism. The google-generativeai SDK takes raw image bytes wrapped in an inline-data part (Part.from_data() plays the same role in the Vertex AI SDK) rather than a base64 string inside the message, which means your abstraction layer needs to account for this if you’re building model-agnostic code.

Accuracy Results: Where Each Model Wins and Loses

Claude 3.5 Sonnet

Claude posted the highest overall field-level accuracy across all three document types: 94.2% on invoices, 91.7% on receipts, 89.4% on forms. Its strongest trait is schema adherence — it almost never returns malformed JSON, and when it can’t confidently extract a field it returns null rather than guessing. That last part matters enormously in production. A null you can flag for review; a hallucinated invoice total you might post to your ledger.

Where Claude struggles: multi-column layouts where line items span three or more columns in a non-standard order. It sometimes collapses adjacent line items into one or splits a single item across two entries. On my test set, about 3% of line item arrays had structural errors of this kind.

GPT-4o

GPT-4o came in at 92.1% on invoices, 90.3% on receipts, and 91.8% on forms — narrowly ahead of Claude on structured forms specifically. Its checkbox detection on multi-page forms was noticeably better; it correctly identified checked vs unchecked states at a higher rate, which matters for insurance and legal document processing.

The failure mode that will bite you in production: GPT-4o hallucinates values more confidently. When a field is partially obscured or ambiguous, it will fill something in rather than returning null. On my receipt test set, it fabricated plausible-looking totals for 11 documents where the thermal print was faded — Claude returned null for 9 of those same 11. If your downstream validation is weak, GPT-4o’s confident hallucinations are more dangerous than Claude’s honest nulls.

JSON format compliance was also slightly weaker — about 2% of responses included markdown code fences or trailing commentary despite explicit instructions. Always wrap your JSON parse in error handling.
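
As a rough illustration of that error handling, the sketch below tries a plain parse first and then falls back to the outermost JSON-looking span, which covers both code fences and trailing commentary. `parse_model_json` and `ExtractionParseError` are hypothetical names introduced here, and the fallback is a heuristic rather than a full parser.

```python
import json


class ExtractionParseError(ValueError):
    """Raised when a model response contains no parseable JSON payload."""


def parse_model_json(raw: str):
    text = raw.strip()
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        pass

    # Fall back to the outermost {...} or [...] span, which skips code
    # fences and any commentary before or after the payload.
    starts = [i for i in (text.find("{"), text.find("[")) if i != -1]
    if not starts:
        raise ExtractionParseError("no JSON found in model response")
    start = min(starts)
    closer = "}" if text[start] == "{" else "]"
    end = text.rfind(closer)
    if end <= start:
        raise ExtractionParseError("unterminated JSON in model response")
    try:
        return json.loads(text[start:end + 1])
    except json.JSONDecodeError as exc:
        raise ExtractionParseError(str(exc)) from exc
```

Raising a dedicated exception lets the pipeline route unparseable responses to a retry or a review queue instead of crashing the worker.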

Gemini 1.5 Pro

Gemini posted 89.6% on invoices, 87.2% on receipts, and 86.9% on forms. The gap is real and consistent enough to matter at scale. Its biggest advantage is context window — at 1M tokens, you can batch multiple documents in a single call, which changes your cost and latency math significantly if you’re doing bulk processing.

Gemini’s failure modes are different in character. It sometimes over-extracts — pulling data from headers and footers into the wrong fields, or including document metadata (like “Page 1 of 3”) in text fields. It’s also more prone to locale-specific date format confusion: a date like “03/04/2024” gets interpreted as March 4 or April 3 inconsistently depending on surrounding context. Explicitly specifying the expected input locale in your prompt reduces but doesn’t eliminate this.
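
One way to contain the date problem is to flag dates that are ambiguous in the first place, so they go to review regardless of which model produced them. The sketch below assumes you also ask the model to return the date string exactly as printed (an extra field not in the schema above); `is_ambiguous_numeric_date` is a hypothetical helper.

```python
import re


def is_ambiguous_numeric_date(text: str) -> bool:
    """True when an NN/NN/YYYY date is valid as both MM/DD and DD/MM."""
    match = re.fullmatch(r"\s*(\d{1,2})[/.-](\d{1,2})[/.-](\d{4})\s*", text)
    if not match:
        return False
    first, second = int(match.group(1)), int(match.group(2))
    # Both parts could be a month, and they differ, so the two readings disagree.
    return 1 <= first <= 12 and 1 <= second <= 12 and first != second
```

A date such as "03/04/2024" is flagged, while "13/04/2024" and "04/04/2024" are not, because only one reading is possible or both readings agree.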

Cost and Speed Comparison at Production Scale

At the time of writing, per-document costs for a typical invoice (one image, ~1500 output tokens) are roughly:

- Claude 3.5 Sonnet: ~$0.008–0.012 per document (input image tokens + output)

- GPT-4o: ~$0.010–0.015 per document

- Gemini 1.5 Pro: ~$0.004–0.007 per document

If you drop to Claude 3 Haiku or GPT-4o-mini, you can hit ~$0.001–0.002 per document, but accuracy drops noticeably — in my testing, Haiku hit 87.3% on invoices, which may be acceptable depending on your tolerance for manual review queues.

Latency (median, single document): Claude Sonnet ~3.2s, GPT-4o ~2.8s, Gemini 1.5 Pro ~4.1s. None of these are dealbreakers for async pipelines, but if you’re building a real-time UX where someone uploads a receipt and expects instant extraction, GPT-4o has a practical edge.

Batching Strategy with Gemini

```python
import google.generativeai as genai
import json

genai.configure(api_key="YOUR_API_KEY")

model = genai.GenerativeModel("gemini-1.5-pro")

def batch_extract_receipts(image_paths: list[str], schema: dict) -> list[dict]:
    """
    Gemini's large context window lets you process multiple documents per call.
    Cost-effective for bulk jobs; don't exceed ~20 images per call or accuracy degrades.
    """
    parts = []
    for path in image_paths:
        with open(path, "rb") as f:
            parts.append({
                "mime_type": "image/jpeg",
                "data": f.read()
            })

    # Tell the model explicitly how many documents to expect
    prompt = f"""You will receive {len(image_paths)} receipt images.
Extract each into the schema below and return a JSON array with one object per receipt.
Maintain document order. Use null for missing fields.
Schema per receipt: {json.dumps(schema)}
Return ONLY a valid JSON array."""

    response = model.generate_content([
        *[genai.protos.Part(inline_data=genai.protos.Blob(**p)) for p in parts],
        prompt
    ])

    raw = response.text.strip()
    if raw.startswith("```"):
        raw = raw.split("\n", 1)[1].rsplit("```", 1)[0]

    return json.loads(raw)
```

In practice, I cap batches at 15–20 documents. Above that, Gemini starts mixing up which extracted data belongs to which document — a failure mode that’s hard to catch unless you have per-document ground truth.
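
The cap is easy to enforce with a small wrapper. This is a sketch under the assumption that you pass in a callable that extracts one batch (for example a lambda around `batch_extract_receipts`); `chunked` and `extract_in_batches` are hypothetical helper names.

```python
def chunked(items: list, size: int = 15):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def extract_in_batches(image_paths: list, extract_batch, size: int = 15) -> list:
    """Run `extract_batch` on capped batches and pair each path with its result."""
    results = []
    for batch in chunked(image_paths, size):
        extracted = extract_batch(batch)
        # A wrong count means the model merged or dropped documents, so
        # positional pairing with the file paths can no longer be trusted.
        if not isinstance(extracted, list) or len(extracted) != len(batch):
            got = len(extracted) if isinstance(extracted, list) else type(extracted).__name__
            raise ValueError(f"expected {len(batch)} results, got {got}")
        results.extend(zip(batch, extracted))
    return results
```

The count check only catches dropped or merged documents. It does not detect values swapped between two receipts in the same batch, so it complements per-document validation rather than replacing it.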

Failure Analysis: The Patterns That Will Break Your Pipeline

Raw accuracy numbers don’t tell you where the pain is. Here’s what actually caused incidents in my pipelines:

Multi-currency and Symbol Ambiguity

All three models struggle when the same document contains amounts in multiple currencies, or when currency symbols are non-standard (CAD $ vs USD $, for instance). GPT-4o defaulted to USD in ambiguous cases 74% of the time. Claude defaulted to null and flagged uncertainty more reliably. Fix: always include “currency context” in your prompt — “This document originates from a Canadian supplier” meaningfully improves accuracy.
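
A small prompt helper keeps that currency context consistent across documents. This is only a sketch: `with_currency_context` and its parameters are hypothetical, and the wording of the hints is an assumption you would tune against your own corpus.

```python
from typing import Optional


def with_currency_context(
    base_prompt: str,
    supplier_country: Optional[str] = None,
    expected_currency: Optional[str] = None,
) -> str:
    """Append currency hints to an extraction prompt when metadata is known."""
    hints = []
    if supplier_country:
        hints.append(f"This document originates from a supplier in {supplier_country}.")
    if expected_currency:
        hints.append(
            f"Amounts are most likely in {expected_currency}; "
            "report a different currency only if the document states it explicitly."
        )
    # Keep the null behavior explicit so ambiguity is flagged, not guessed.
    hints.append("If the currency cannot be determined, set currency to null.")
    return base_prompt.rstrip() + "\n\n" + "\n".join(hints)
```

In a pipeline, the country or currency would typically come from the vendor record or the mailbox the document arrived in, not from the document itself.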

Handwritten Field Overlaps with Printed Text

On paper forms where someone has handwritten values over printed labels, accuracy drops across the board — Claude to ~81%, GPT-4o to ~79%, Gemini to ~74%. There’s no prompt engineering fix here; the underlying vision model is doing its best. If handwritten input is a significant portion of your volume, pre-process with a dedicated OCR layer (Tesseract or Google Document AI) and pass extracted text + image to the LLM.
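
Passing extracted text plus the image can be as simple as adding the OCR transcript to the text block. The sketch below builds the content list in the shape used by the Anthropic example earlier; it assumes the OCR text has already been produced by whatever OCR layer you run, and `build_hybrid_content` and the `<ocr>` delimiter are illustrative choices, not an SDK feature.

```python
def build_hybrid_content(
    image_b64: str,
    ocr_text: str,
    instructions: str,
    media_type: str = "image/jpeg",
) -> list:
    """Build message content that carries both the page image and its OCR text."""
    return [
        {
            "type": "image",
            "source": {"type": "base64", "media_type": media_type, "data": image_b64},
        },
        {
            "type": "text",
            "text": (
                f"{instructions}\n\n"
                "OCR transcript of the same page (may contain errors; "
                "prefer the image where the two disagree):\n"
                f"<ocr>\n{ocr_text}\n</ocr>"
            ),
        },
    ]
```

Telling the model which source wins on disagreement is a judgment call; for heavily handwritten pages you may want the opposite preference.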

Nested Line Items and Sub-totals

Construction and professional services invoices often have grouped line items with sub-totals. All three models frequently flatten these into a single level, losing the grouping. If you need the hierarchy, post-process the flat array using the subtotal values to reconstruct groups — don’t rely on the model to handle it natively.
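
The post-processing step can be sketched as follows. It assumes the model emits sub-total rows as ordinary line items whose description contains "subtotal" (with or without a hyphen), which is a convention you would need to confirm on your own documents; `regroup_by_subtotals` is a hypothetical helper.

```python
def regroup_by_subtotals(flat_items: list, tolerance: float = 0.01) -> list:
    """Rebuild groups from a flat line-item list using sub-total rows as separators."""
    groups, current = [], []
    for item in flat_items:
        label = (item.get("description") or "").lower().replace("-", "").replace(" ", "")
        if "subtotal" in label:
            stated = item.get("amount")
            running = sum((i.get("amount") or 0.0) for i in current)
            groups.append({
                "items": current,
                "subtotal": stated,
                # False means the items above do not add up to the stated sub-total.
                "reconciled": stated is not None and abs(running - stated) <= tolerance,
            })
            current = []
        else:
            current.append(item)
    if current:
        # Items after the last sub-total row belong to no closed group.
        groups.append({"items": current, "subtotal": None, "reconciled": False})
    return groups
```

The `reconciled` flag doubles as a cheap extraction check: a group whose items do not sum to its stated sub-total is a good candidate for manual review.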

JSON Schema Enforcement

None of these models obeys schema constraints 100% of the time without additional enforcement. Use structured outputs where available: OpenAI’s response_format: { type: "json_schema" } (with strict mode enabled) enforces the schema at the API level, and Anthropic’s tool-use/function-calling pattern steers the output into a tool’s input schema; both are worth the slightly more complex integration. Gemini has a response_mime_type: "application/json" option that helps but doesn’t enforce field types on its own; pairing it with a response_schema constrains the structure further.
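
For the Anthropic side, the tool-use pattern might look like the sketch below. The tool name `record_invoice`, the trimmed-down schema, and both helper functions are hypothetical; the `tools`, `tool_choice`, and `input_schema` keys are the Messages API parameters. The helpers only build the request arguments and read the response, so the network call itself is left to your client code.

```python
INVOICE_TOOL = {
    "name": "record_invoice",  # hypothetical tool name
    "description": "Record the fields extracted from an invoice image.",
    "input_schema": {
        "type": "object",
        "properties": {
            "vendor_name": {"type": ["string", "null"]},
            "invoice_number": {"type": ["string", "null"]},
            "total_amount": {"type": ["number", "null"]},
            "currency": {"type": ["string", "null"]},
        },
        "required": ["vendor_name", "invoice_number", "total_amount", "currency"],
    },
}


def tool_use_kwargs(content_blocks: list) -> dict:
    """Keyword arguments for client.messages.create that force the tool call."""
    return {
        "model": "claude-3-5-sonnet-20241022",
        "max_tokens": 2048,
        "tools": [INVOICE_TOOL],
        "tool_choice": {"type": "tool", "name": INVOICE_TOOL["name"]},
        "messages": [{"role": "user", "content": content_blocks}],
    }


def tool_input(response_content: list) -> dict:
    """Return the input dict of the first tool_use block in a response."""
    for block in response_content:
        if getattr(block, "type", None) == "tool_use":
            return block.input
    raise ValueError("model did not call the tool")
```

Usage would be along the lines of `tool_input(client.messages.create(**tool_use_kwargs(content)).content)`. Treat the returned dict as still needing validation, since a forced tool call shapes the output but is not a substitute for checking values.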

Which Model Should You Actually Use?

For highest accuracy with minimal review overhead — use Claude 3.5 Sonnet. The null-over-hallucination behavior is genuinely production-safer, and schema compliance is the best of the three. If you’re building for finance, legal, or compliance contexts where a wrong value is worse than a missing value, this matters more than the small cost premium.

For form processing with checkboxes and structured fields — GPT-4o is competitive. Its checkbox/selection field accuracy is slightly better, and the latency advantage matters if you’re building synchronous user-facing features. Use structured output mode to mitigate the hallucination risk.

For high-volume, cost-sensitive pipelines where you have strong downstream validation — Gemini 1.5 Pro with batching. The cost savings are real (roughly half the per-document cost vs Claude Sonnet at scale), and if your validation layer catches errors before they propagate, the accuracy gap is manageable. A pipeline that processes 50,000 receipts/month saves ~$150–200/month using Gemini over Claude — not enterprise-moving money, but real for a bootstrapped product.
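
To sanity-check savings figures like this against your own volume, the arithmetic is short enough to script. The sketch below plugs in the per-document invoice ranges quoted earlier; the function names are hypothetical, and receipts may cost less per document than invoices, so treat the output as a band rather than a forecast.

```python
def monthly_cost_range(docs_per_month: int, low_per_doc: float, high_per_doc: float) -> tuple:
    """Monthly spend range in dollars for a per-document cost range."""
    return docs_per_month * low_per_doc, docs_per_month * high_per_doc


def savings_range(expensive: tuple, cheap: tuple) -> tuple:
    """Smallest and largest possible monthly saving between two cost ranges."""
    return expensive[0] - cheap[1], expensive[1] - cheap[0]


claude = monthly_cost_range(50_000, 0.008, 0.012)
gemini = monthly_cost_range(50_000, 0.004, 0.007)
low, high = savings_range(claude, gemini)
print(
    f"Claude ${claude[0]:.0f}-{claude[1]:.0f}/month, "
    f"Gemini ${gemini[0]:.0f}-{gemini[1]:.0f}/month, "
    f"saving ${low:.0f}-{high:.0f}/month"
)
# prints: Claude $400-600/month, Gemini $200-350/month, saving $50-400/month
```

The ~$150–200 estimate sits inside that band; where you land depends on image size, output length, and how much batching you actually achieve.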

For solo founders or small teams just getting started: start with Claude Sonnet for the schema compliance and honest nulls. You’ll spend less time debugging extraction errors and more time building the rest of your pipeline. Optimize cost later once you know your actual volume and error tolerance.

The broader takeaway for LLM structured data extraction at scale: accuracy differences between frontier models are real but not huge. The biggest gains come from prompt engineering (explicit schema, locale context, null instructions), schema enforcement at the API level, and a validation layer that catches failures before they propagate. Pick a model, instrument your error rates from day one, and optimize from real production data rather than from benchmarks, including mine.
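
A validation layer does not need to be elaborate to be useful. The sketch below checks the invoice schema used earlier for missing fields and for arithmetic that does not reconcile; which fields count as required and the one-cent tolerance are assumptions for illustration, and `validate_invoice` is a hypothetical name.

```python
def validate_invoice(doc: dict, tolerance: float = 0.01) -> list:
    """Return a list of human-readable problems; an empty list means the doc passed."""
    problems = []

    # Assumed required set: a null in any of these goes to manual review.
    for field in ("vendor_name", "invoice_number", "invoice_date", "total_amount", "currency"):
        if doc.get(field) is None:
            problems.append(f"missing {field}")

    subtotal = doc.get("subtotal")
    tax = doc.get("tax_amount")
    total = doc.get("total_amount")
    if None not in (subtotal, tax, total) and abs(subtotal + tax - total) > tolerance:
        problems.append("subtotal + tax_amount does not equal total_amount")

    amounts = [item.get("amount") for item in (doc.get("line_items") or [])]
    if subtotal is not None and amounts and None not in amounts:
        if abs(sum(amounts) - subtotal) > tolerance:
            problems.append("line item amounts do not sum to subtotal")

    return problems
```

Arithmetic checks like these catch a fabricated total that looks plausible on its own, which is exactly the failure a null check cannot see. Logging the returned problems per model gives you the error-rate instrumentation from day one.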

Editorial note: API pricing, model capabilities, and tool features change frequently, so always verify current details on the vendor’s website before building in production. Code examples are tested at time of writing; pin your dependency versions to avoid breaking changes.